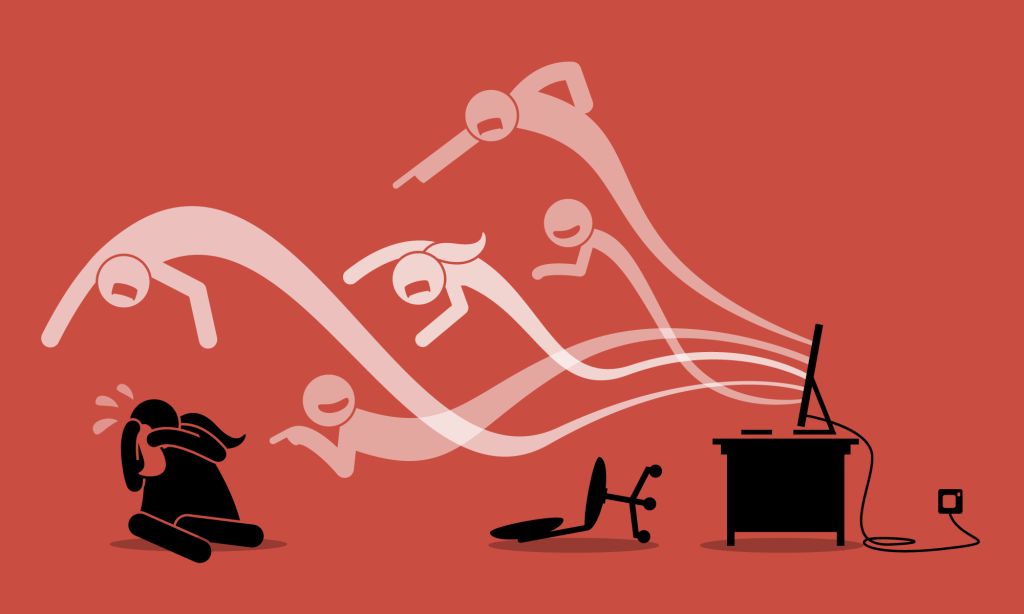

For decades, the internet has been celebrated as a space of boundless connection, democratized information, and limitless opportunity. But as digital platforms have proliferated, they have also revealed darker undercurrents of hostility, harassment, and organized hate campaigns that do not remain confined to the screen. Increasingly, evidence suggests that online hate speech is not merely a virtual problem. It spills into the offline world, shaping perceptions, instilling fear, and, in some cases, inciting physical violence. This article aims to underscore the seriousness of the issue by exploring how words typed on keyboards transform into actions that wound individuals, destabilize societies, and erode digital safety.

One of the clearest indicators that online hate affects real lives is the psychological harm it causes. A growing body of research demonstrates that victims of online hate speech report heightened feelings of insecurity even in offline spaces. Digital attacks often follow victims beyond the confines of social media platforms, infiltrating their daily routines, relationships, and mental health. The persistence of online hostility, through posts that can be shared, screenshotted, or resurfaced at any moment, magnifies the sense that there is no escape. Unlike a passing insult in the street, online hate is permanent, searchable, and public.

The permanence of online hate is compounded by the way it spreads. Digital platforms thrive on visibility and virality. Content that shocks or provokes is often rewarded with engagement, which in turn boosts its reach through recommendation systems. This means hateful content can travel far beyond the initial audience, exposing more people to harmful stereotypes or dehumanizing rhetoric. Scholars have argued that such amplification lowers the threshold for prejudice in society by making hostile views appear more common or socially acceptable. When hate is normalized online, it erodes the social stigma that once discouraged its open expression offline. What was once unsayable in polite society becomes memeable, retweetable, and, eventually, repeatable in physical interactions.

The consequences of normalization are profound. Dehumanizing language, when repeated and amplified, can condition audiences to view targeted groups as dangerous, unworthy, or subhuman. Psychological studies show that exposure to hate speech not only reduces empathy toward marginalized groups but also alters cognitive processing, making discriminatory behavior more likely. In practice, this means that an individual who repeatedly consumes racist, xenophobic, or misogynistic content online may be less inclined to view members of those groups as deserving of dignity and protection in real-world encounters. Such erosion of empathy can pave the way for verbal harassment in public, discriminatory hiring decisions, biased policing, or even violent attacks.

The link between online hate and offline violence becomes especially clear in the aftermath of major social or political events. Following politically charged incidents, spikes in the online hate speech directed at women politicians are often documented, sometimes accompanied by rises in hate crimes. This connection between digital speech and real-world behavior underscores that the boundary between online and offline is porous. What begins as a hashtag or meme can embolden individuals to act out prejudice in polling stations, streets, schools, workplaces, or homes.

Curbing Online Hate and Preventing It from Moving Offline

Solutions to online hate speech must operate at multiple levels. At the platform level, stronger content moderation policies are crucial. Companies need to enforce bans on hate speech consistently and transparently, rather than leaving harmful content online because it drives clicks and advertising revenue. At the community level, counter-speech campaigns have shown promise. When hateful narratives are met with facts and empathy for the victims, the spread of hate can be countered. Civil society groups around the world have pioneered creative ways of reclaiming hashtags, challenging stereotypes, and amplifying the voices of marginalized groups to shift the narrative of online hate discourse.

At the policy level, governments face the delicate task of balancing freedom of expression with protection from harm. Overly broad censorship risks silencing legitimate dissent, while lax regulation allows online hate and offline violence to go unpunished. Some regions, such as the European Union, have adopted codes of conduct and the Digital Services Act, which gives the European Commission authority to enforce content moderation obligations on large online platforms and compels those platforms to act more responsibly. Other countries are exploring early warning systems that monitor spikes in hate speech during moments of social tension, enabling faster interventions before violence erupts.

In conclusion, addressing the pathways from online hate to offline violence requires recognizing that the digital sphere is not separate from the offline world. The harms are interconnected, reinforcing, and mutually escalating. Words on a screen can indeed wound, not only psychologically but also socially and physically. If left unchecked, online hate corrodes the foundations of pluralistic societies, where diversity and dignity should flourish and be protected rather than attacked. Protecting people from harm means treating digital hostility with the seriousness it deserves, not as a virtual nuisance, but as a public safety and human rights issue that demands urgent attention from all stakeholders.

By Patricia Namakula